Teaching A Computer What I Look Like

by Joseph Waine 3/14/2026

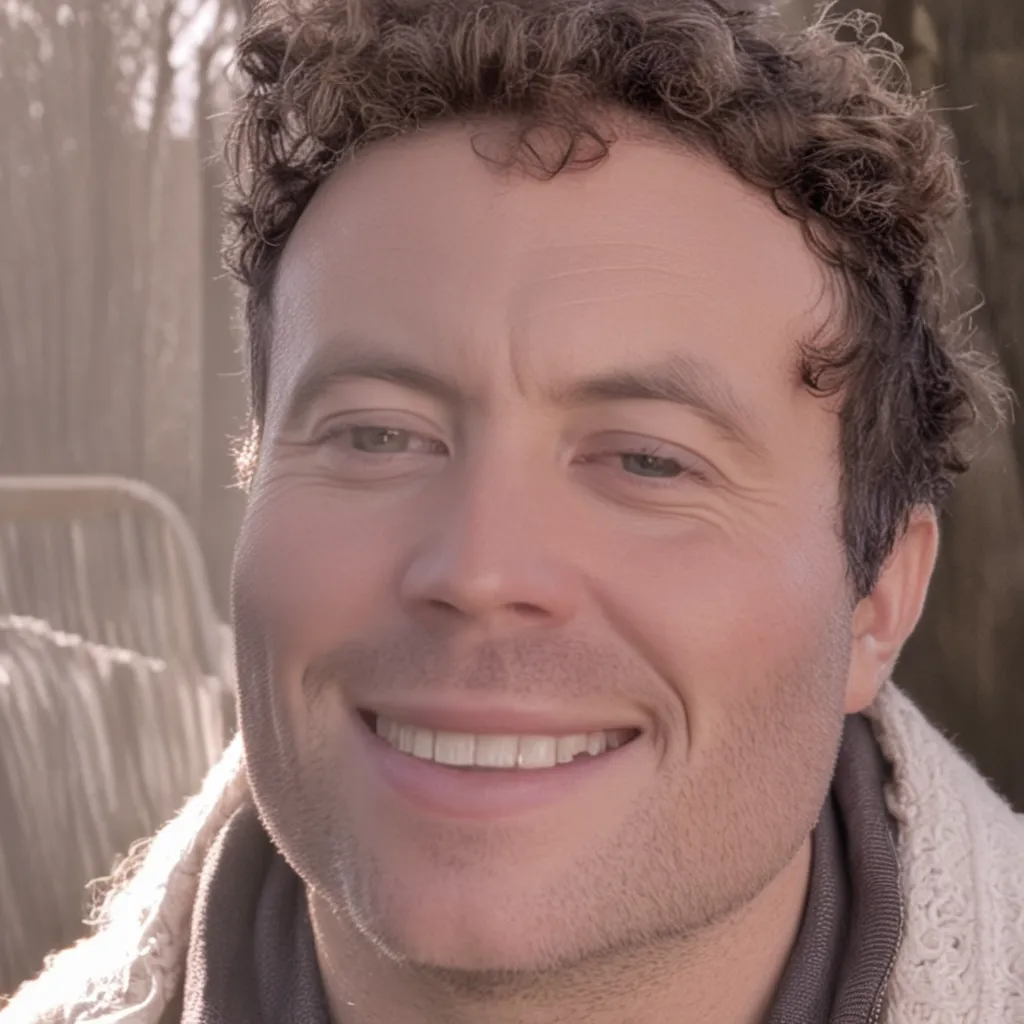

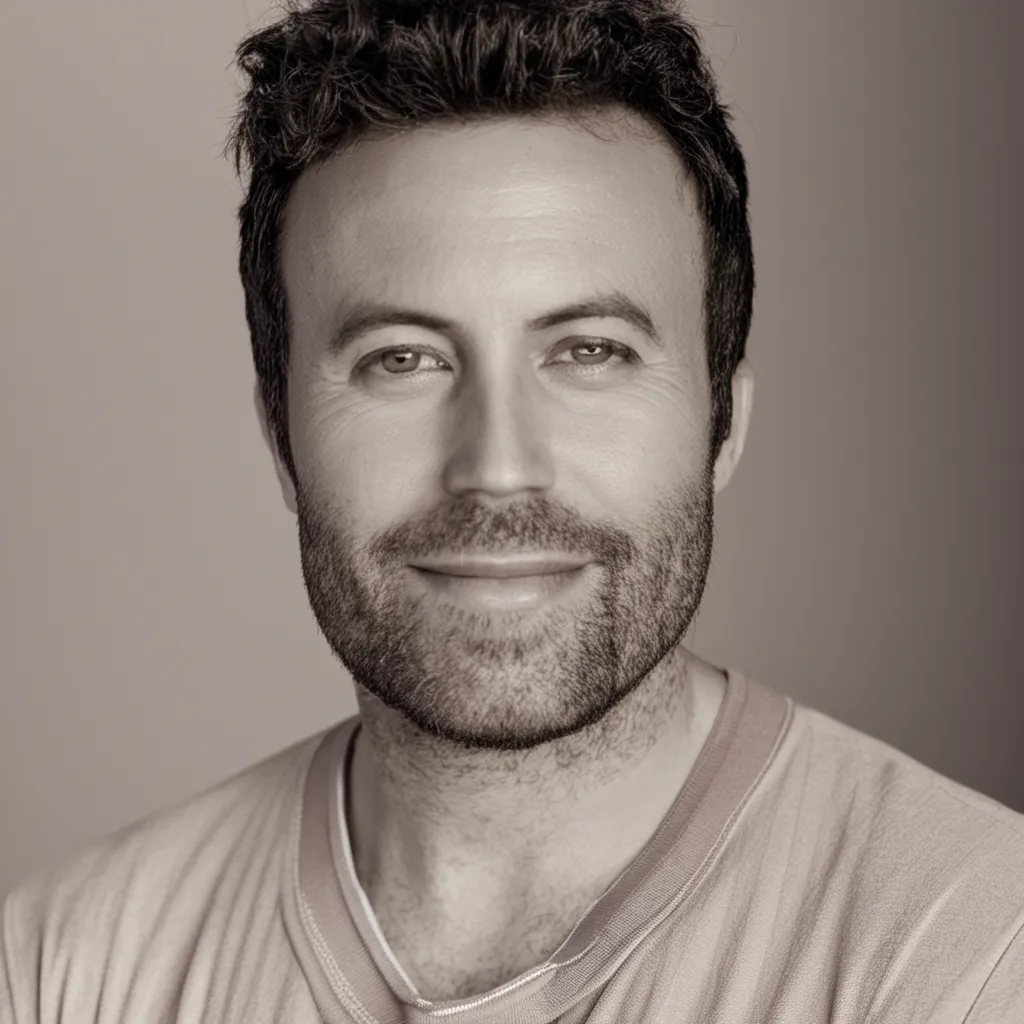

This week I learned how to train models. I started out with a text prediction model, coded up a little transcriber with some open source tools, then narrowed my focus to image generation. To keep it even more specific and endlessly fascinating (to me), I gave a computer 39 photos of my face from the past two years. I've had a few different hair colors and a range of moods (mostly happy or neutral :)), and I turned 39 this year hence the 39.

my training data:

I used DreamBooth and LoRA (two fine tuning techniques) to learn what I look like followed by Stable Diffusion (an open-source text to image model) to generate images of "me" that previously didn't exist. I'm fascinated that this can be done, but it's truly illuminating what happens when parameters are dialed up and down.

Teaching the model my face.

DreamBooth and LoRA already know what faces are, since they were trained on millions of images — they understand facial features, lighting and shadows. I showed it the 39 examples and explained that "when I say sks (a unique token to reference me), I mean this person." After about 20 minutes of training on a powerful cloud GPU hosted by Modal, it learned the association, making a prompt commanding to generate a photo of sks person, creating a version of me.

This is what is referred to as "fine tuning" - I used a model with broad knowledge then taught it something specific. Like hiring a sculptor and showing them me until they can create a statue of me from memory :)

The temperature dial

I was drawn to the "temperature" parameter, the setting which determines how predictable or chaotic the product of a model is. All AI models have this temperature setting, whether it generates text, images, or music.

At temperature 0, the model makes the safest, most predictable choice every time. It picks whatever it's most confident about and doesn't deviate. The result is technically correct but... stiff.

Turn the temperature up and the model starts taking risks. The outputs get more varied, more surprising, sometimes more interesting — and sometimes more wrong.

In a text prediction model, a low temperature phrase would be something like "the sky is blue and clear today, with no clouds in sight", whereas a high temperature phrase would be something like "the sky is melting cathedral bones, a neon choir humming sideways through the fiery teeth of tuesday."

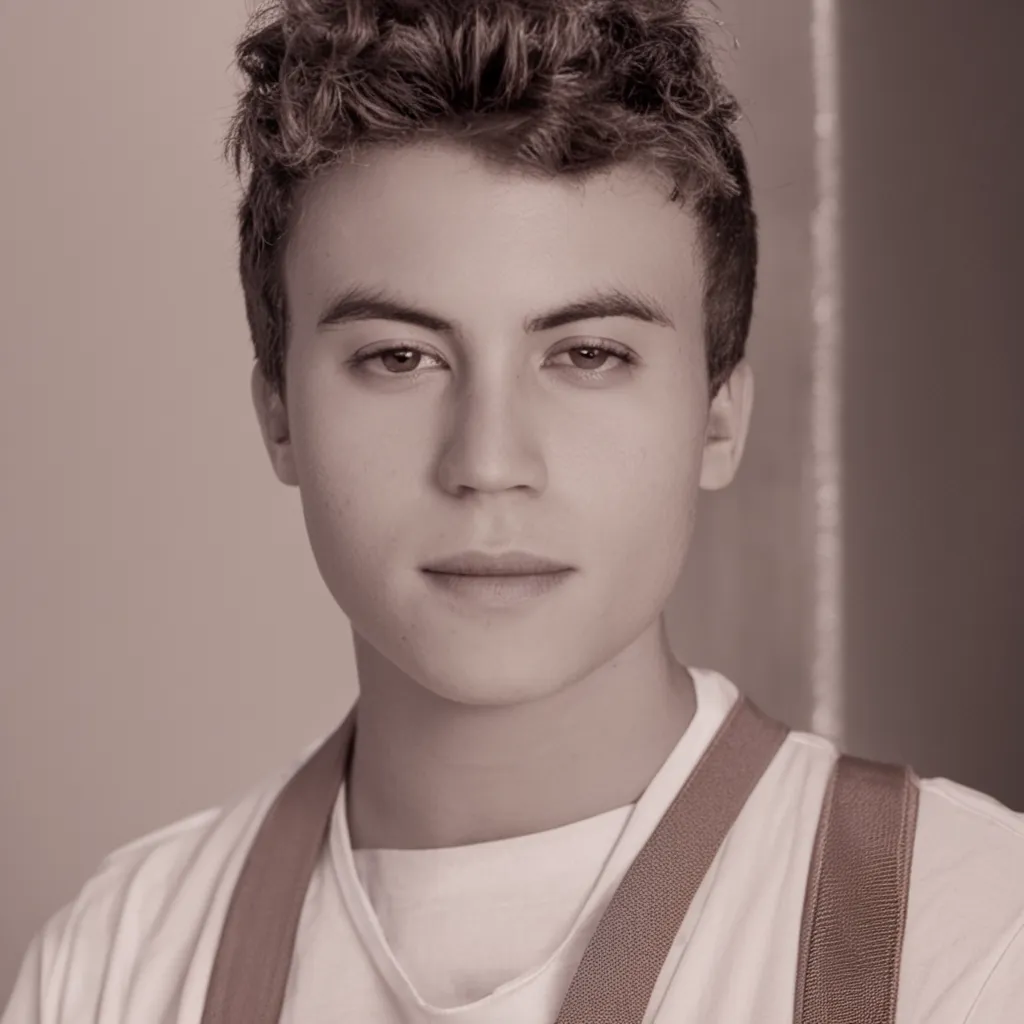

It's pretty funny how the personality of the 0.0 version of me resembles a more solid dependable fellow that likes his exercise and makes sure his jaw is strong with the help of some mastic gum. The 1.0 version of 'me' is a bit more of a blurry chaos wizard, a time traveling artist type from an ambiguous age. I can see many great qualities in both versions of me.

How the model learns over time

At different levels of training, the model goes from generic to specific, so as you can see below, the model at zero strength generates a default person for this prompt. Since in some of my source images I have bleached blonde hair (and in this round I never specified that I am a man), the blonde color is associated with women, so the zero strength model is a blonde haired woman that looks nothing like me. As you can see, the fully trained model generates something a little more convincing :)

from untrained to fully trained

Prompt power

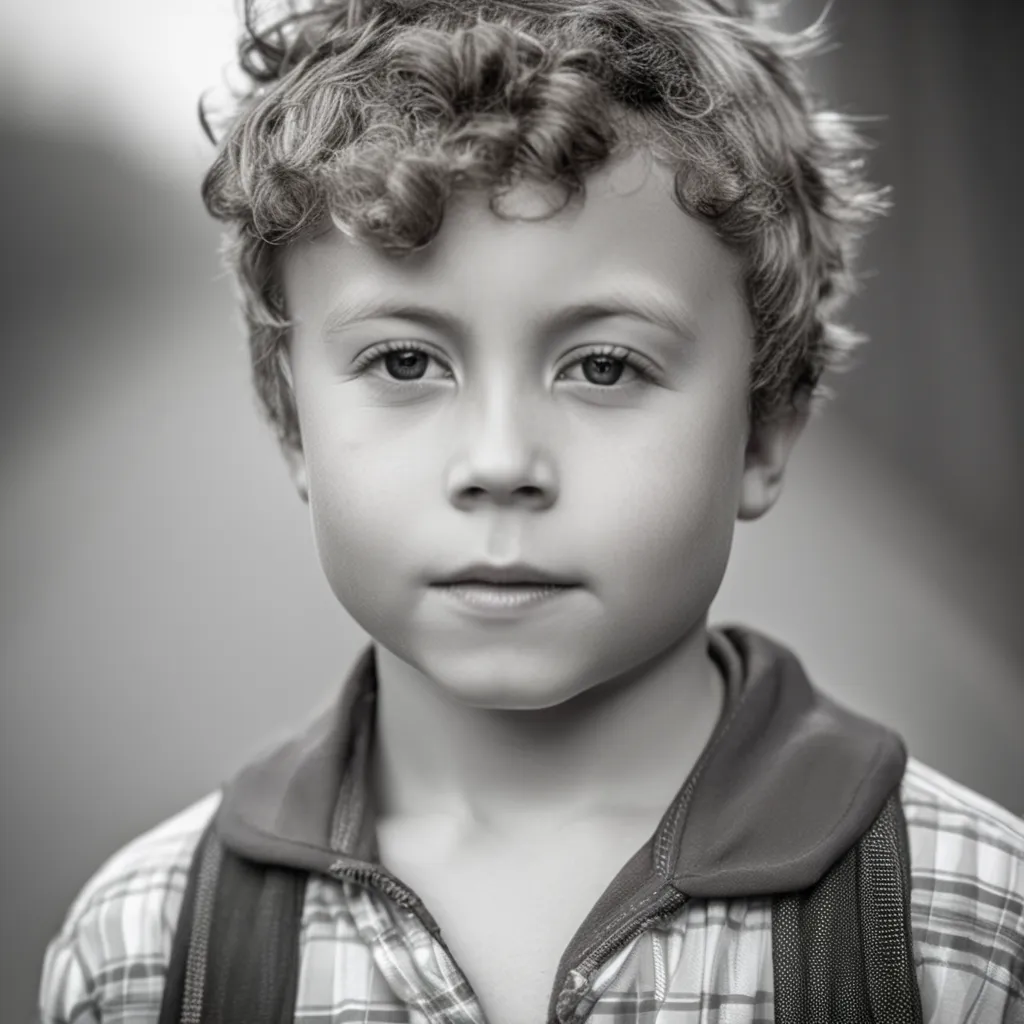

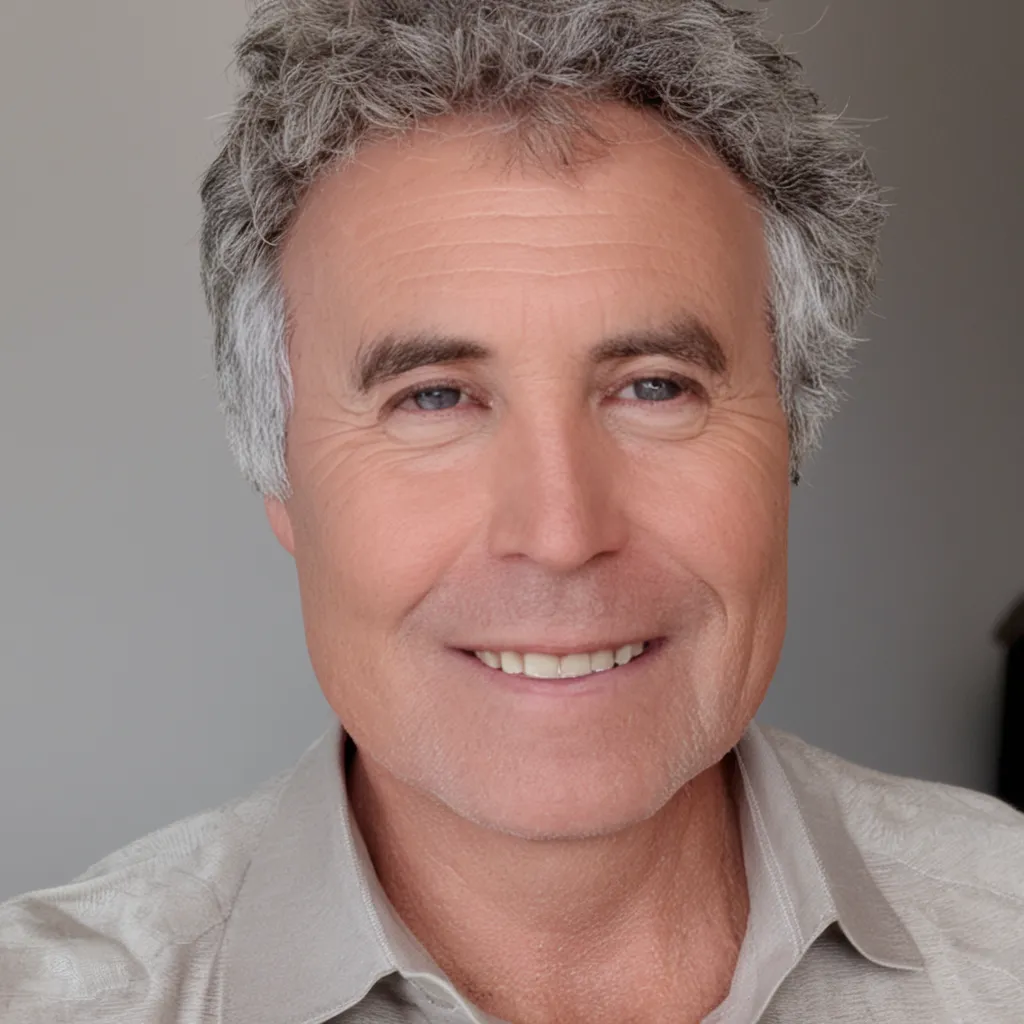

The real power is in the prompt. After the model learned my face, I could experiment with how my facial data is applied. This example shows me interpreted at ages 5 to 80:

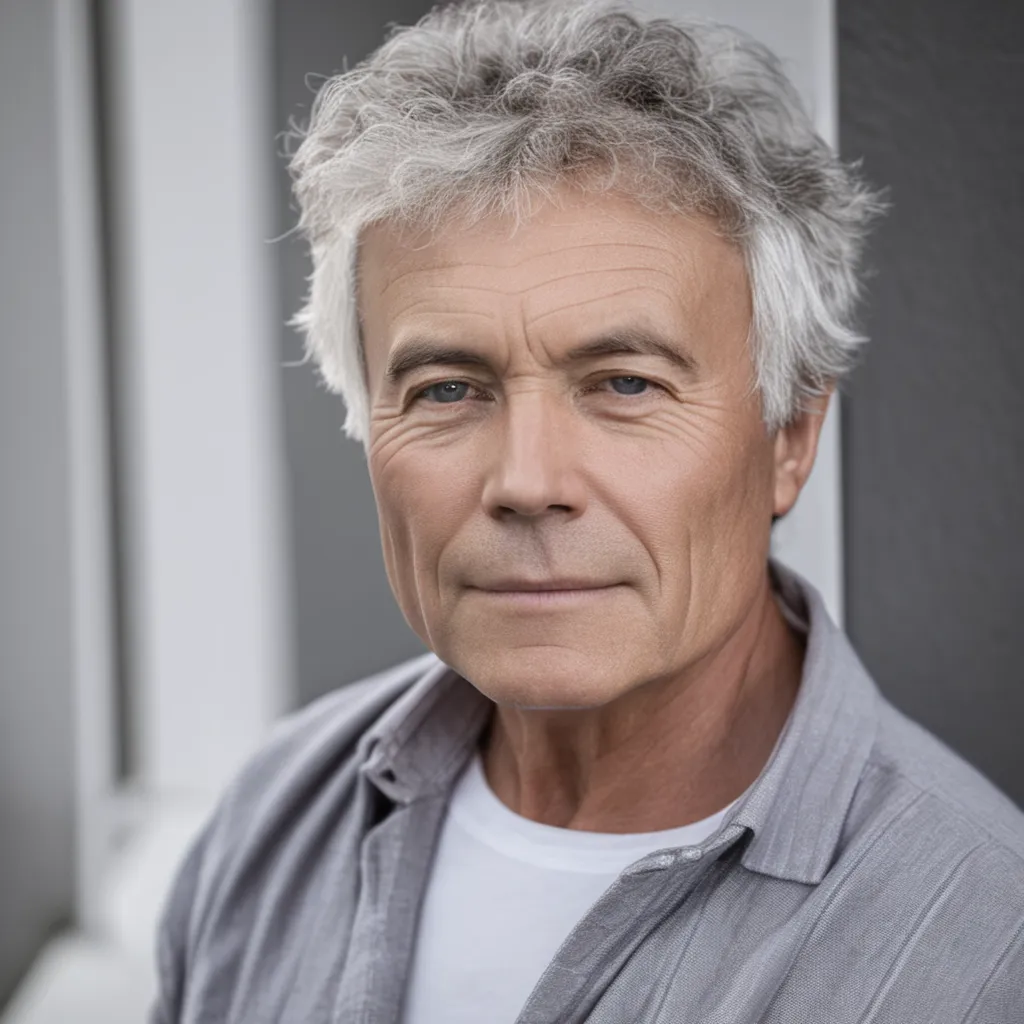

I think ages 10, 18, and 50 are the most accurate, but at 25 and 80 the model drifted. At 80, it generated an elderly woman. With only 39 photos from a narrow age range, the model is falling back on its general knowledge for different ages, which clearly has biases.

This is the thing about AI models that's hard to appreciate until you see it: they are always blending what you taught them with what they already knew. Now that I understood this, I became curious about using a bigger sample set. The fine-tuning is a really thin layer built on top of a really massive foundation. My intuition told me that with a stronger layer, the foundation wouldn't show through as much. 39 photos were enough to teach it my face but they weren't enough to teach it my face in every possible context, so I tried again with more.

Part 2: What 1,559 Photos Changes

Same process. 42x the data.

With the help of Google photos, I amassed 1559 images of myself spanning the years. I then ran the exact same process with a beefier GPU but the exact same model and technique, just overwhelmingly more data. Before the experiment, I was super confident that 42x more data would produce noticeably better results.

| Round 1 | Round 2 | |

|---|---|---|

| Training images | 39 | 1,559 |

| Training steps | 500 | 1,500 |

| GPU | A10G (24GB) | A100 (40GB) |

| LoRA rank | 16 | 32 |

| Training time | ~26 min | ~35 min |

| Cost | ~$0.50 | ~$2.50 |

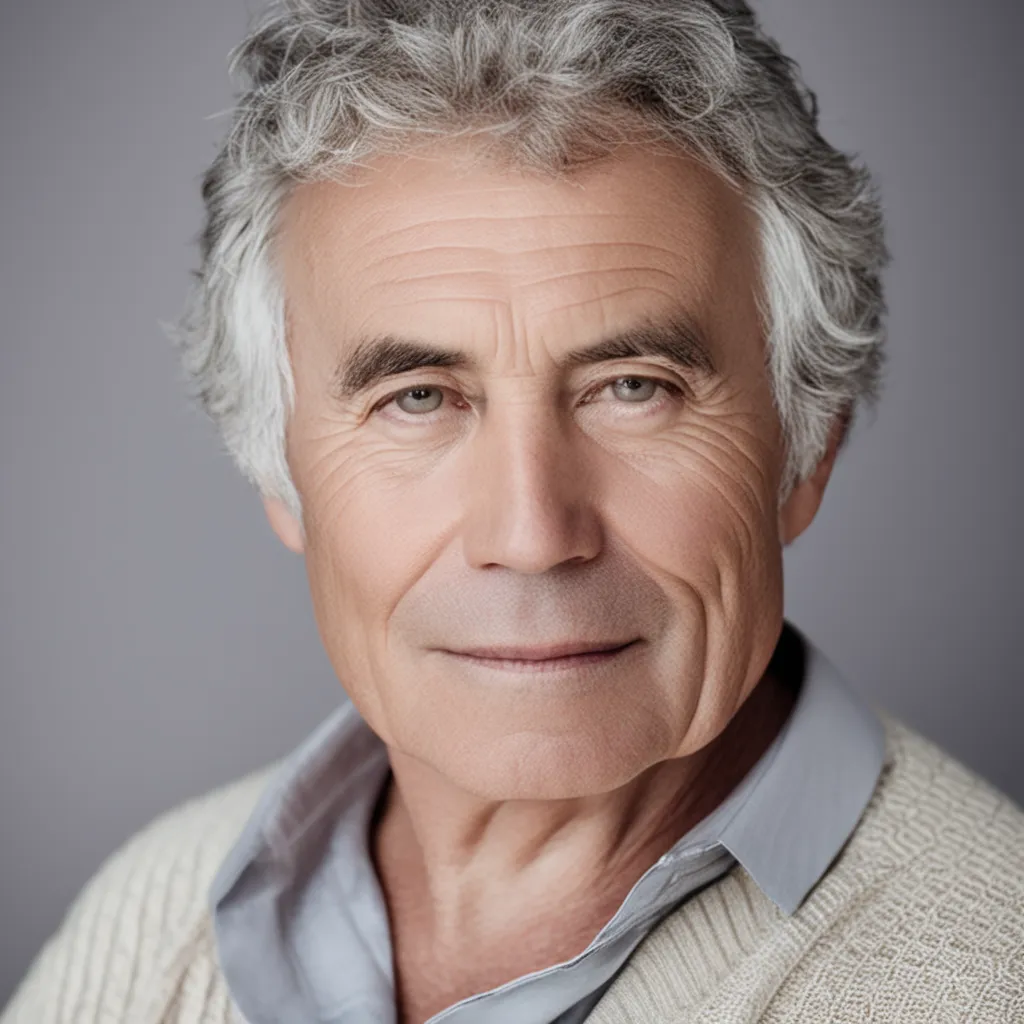

Age progression — with more data

Age progression (1,559 images): 5 to 80

What I learned

I would say the only impressive outcome of the 1559 vs the 39 was the 0.0 image of my face. The model learned my bone structure a lot more accurately. Otherwise I wasn't too impressed. Admittedly, the 1559 images weren't cropped or optimized, so I will probably add an update to this writing after running the same training function with optimized images.

Conclusion:

In AI, temperature is everything. When a frontier model gives you a different answer each time you ask the same question or when an AI music tool generates a surprising melody, that is the temperature being cranked up. The dial between predictable and interesting is a line to ride in and out of the AI world. If life is constantly predictable, things become bland. When there is too much chaos, things fall apart. This is important to remember! A safe version of yourself is always going to be the most generic, whereas the more risks you allow, the more distinctly "you" the results become. I implore you to turn up the temperature a bit on your own life, but not too much :0)

Bibliography

-

Stable Diffusion XL — open-source text-to-image model

by Stability AI

stability.ai/stable-diffusion -

DreamBooth — fine-tuning technique for teaching a

model new subjects from a few images

dreambooth.github.io -

LoRA (Low-Rank Adaptation) — efficient fine-tuning

method that trains a small adapter instead of the full model

huggingface.co/docs/peft/conceptual_guides/adapter#low-rank-adaptation-lora -

Diffusers — Hugging Face library for running and

training diffusion models

huggingface.co/docs/diffusers -

Modal — cloud GPU platform used to train and run

inference

modal.com -

face_recognition — Python library (built on dlib)

used to detect and crop faces from training images

github.com/ageitgey/face_recognition -

PEFT — Hugging Face library for parameter-efficient

fine-tuning

huggingface.co/docs/peft -

Pillow — Python imaging library used for image

preprocessing and resizing

pillow.readthedocs.io