Week four of the Fractal Tech accelerator was about going down the rabbit hole, in one case literally. I set out to build four projects in a single week, each pushing into territory I hadn't touched before: a personalized hypnotic meditation app, an AI-generated mind movie pipeline, a procedurally generated 3D game based on Dante's Divine Comedy, and a browser-based Alice in Wonderland platformer.

Not all of them are finished. But all of them taught me something I didn't expect.

Aletheia: AI-Powered Personalized Meditation

Aletheia is a meditation generator that creates sessions tailored to your actual physical and psychological state. It ingests Oura Ring biometrics, bloodwork results, and personal check-ins, then assembles a six-layer context profile — biometric, biochemical, behavioral, psychological, commitments, and session history — to build deeply personalized hypnotic scripts.
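To make the six-layer idea concrete, here is a minimal sketch of how such a profile could be held and serialized before it goes into a script prompt. The class name, field types, and `build_prompt_context` helper are assumptions for illustration, not Aletheia's actual code; only the six layer names come from the description above.

```python
import json
from dataclasses import dataclass, field

# The six layers named in the post; everything else here is hypothetical.
LAYERS = (
    "biometric",
    "biochemical",
    "behavioral",
    "psychological",
    "commitments",
    "session_history",
)


@dataclass
class ContextProfile:
    biometric: dict = field(default_factory=dict)      # e.g. wearable readings
    biochemical: dict = field(default_factory=dict)    # e.g. bloodwork values
    behavioral: dict = field(default_factory=dict)
    psychological: dict = field(default_factory=dict)  # e.g. check-in answers
    commitments: list = field(default_factory=list)
    session_history: list = field(default_factory=list)


def build_prompt_context(profile: ContextProfile) -> str:
    """Serialize only the layers that actually contain data."""
    populated = {
        name: getattr(profile, name)
        for name in LAYERS
        if getattr(profile, name)
    }
    return json.dumps(populated, indent=2, sort_keys=True)
```

Skipping empty layers keeps the prompt short when, say, no recent bloodwork exists, which matters for a small local model.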

The depth progression system was the interesting part. Sessions deepen as trust builds over time. Early sessions are gentle; later ones push harder. Multi-voice TTS via ElevenLabs gives it an immersive quality, and the whole thing runs privacy-first with SQLite and local Ollama — no data leaves your machine.
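One simple way to picture depth progression is as a level that rises with the number of completed sessions and then caps out. This is a sketch under that assumption: the function name, the five-level cap, and the three-sessions-per-level rate are all made up for illustration, and the real system may measure trust differently.

```python
def session_depth(
    completed_sessions: int,
    max_depth: int = 5,
    sessions_per_level: int = 3,
) -> int:
    """Return a depth level from 1 (gentle) up to max_depth (most intense).

    Assumes completed sessions are a reasonable proxy for built-up trust.
    """
    if completed_sessions < 0:
        raise ValueError("completed_sessions must be non-negative")
    if sessions_per_level < 1:
        raise ValueError("sessions_per_level must be at least 1")
    return min(max_depth, 1 + completed_sessions // sessions_per_level)
```

With these defaults a first-time user starts at depth 1, moves to depth 2 after three sessions, and never goes past depth 5.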

What surprised me was how quickly a working version came together. The main limitations weren't the AI — they were the naturalness of voice APIs and how fresh the biomarker data actually is. The bigger question is whether people would actually provide that much personal information. Good proof of concept, though. Could work in a clinical setting.

MindCine: AI-Generated Mind Movies

MindCine is a CLI pipeline that turns your personal life vision into a short visualization video, inspired by Joe Dispenza's mind movie technique. You answer questions about six life areas — health, relationships, career, spiritual growth, environment, finances — and the system generates AI video scenes with affirmation overlays set to binaural audio.

I took a different approach to the affirmations. Instead of generic abundance statements like "I am massively successful," MindCine uses subtraction-style affirmations: "I no longer chase. I trust what's coming." The idea is to remove false layers rather than stack aspirational fantasies on top.

Runway ML handles the video generation, with optional reference photos of yourself for personalization. Scenes are ordered by an emotional arc, building in intensity toward your peak moment. The personalization gap is still real, though: convincing AI-generated results are hard to get. But honestly, the AI-generated video is potentially better than the stock imagery currently being sold as mind movie products.
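The emotional-arc ordering can be sketched as a sort on a per-scene intensity score, so the strongest scene lands last. The `Scene` fields and the 0-to-1 `intensity` score are assumptions for illustration; the post does not say how MindCine scores scenes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scene:
    life_area: str     # one of the six life areas
    affirmation: str   # overlay text
    intensity: float   # hypothetical 0..1 score, higher = more emotionally charged


def order_by_emotional_arc(scenes: list[Scene]) -> list[Scene]:
    """Order scenes from calmest to most intense; the peak moment comes last."""
    return sorted(scenes, key=lambda scene: scene.intensity)


scenes = [
    Scene("career", "I no longer chase. I trust what's coming.", 0.9),
    Scene("health", "I no longer fight my body.", 0.3),
    Scene("finances", "I no longer count what's missing.", 0.6),
]
arc = order_by_emotional_arc(scenes)
```

Python's `sorted` is stable, so scenes with equal intensity keep the order in which the user answered the questions.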

Existing mind movie product:

My AI-generated version:

Dante's Inferno: Literature to 3D World

This one was ambitious. A first-person Unity game that procedurally generates the nine circles of Hell from the actual text of Dante's Divine Comedy. The pipeline pulls text from Project Gutenberg, sends it through the Claude API to extract environments, characters, and dialogue as structured JSON, and builds walkable 3D worlds from that data.
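The step where model output becomes world data is worth guarding, since a model can return malformed or incomplete JSON. Below is a sketch of that validation step only, written in Python for brevity rather than in the C# a Unity project would use. The `Circle` shape and its key names are an assumed schema, not the one the project actually uses.

```python
import json
from dataclasses import dataclass

# Hypothetical schema: the post only says environments, characters,
# and dialogue are extracted as structured JSON.
REQUIRED_KEYS = {"number", "environment", "characters", "dialogue"}


@dataclass
class Circle:
    number: int
    environment: str
    characters: list
    dialogue: list


def parse_circle(raw: str) -> Circle:
    """Parse one circle's JSON and reject anything incomplete or out of range."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    number = int(data["number"])
    if not 1 <= number <= 9:
        raise ValueError("circle number must be between 1 and 9")
    return Circle(
        number=number,
        environment=str(data["environment"]),
        characters=list(data["characters"]),
        dialogue=list(data["dialogue"]),
    )
```

Failing loudly here means a bad extraction is caught before it reaches world generation.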

Nine distinct circles, each with unique atmosphere, particle effects, and ambient audio. Virgil guides you through. Sinners tell their stories. Boss gates block progression between circles. And there are graceful fallbacks everywhere — no API available? Pre-generated JSON. No 3D model? Capsule placeholder.
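The fallback pattern described above can be sketched as "try the live source, otherwise use what is already on disk". This is an illustration in Python, not the game's Unity code; `load_circle_data`, `resolve_model`, and the `capsule_placeholder` name are hypothetical.

```python
import json
from pathlib import Path
from typing import Callable, Optional


def load_circle_data(
    fetch_live: Optional[Callable[[], dict]],
    cached_path: Path,
) -> dict:
    """Prefer live API extraction; fall back to pre-generated JSON on any failure."""
    if fetch_live is not None:
        try:
            return fetch_live()
        except Exception:
            # No network, no API key, rate limit: all treated the same way.
            pass
    return json.loads(cached_path.read_text(encoding="utf-8"))


def resolve_model(name: str, available: set) -> str:
    """Return the requested 3D model name, or a placeholder if it is missing."""
    return name if name in available else "capsule_placeholder"
```

Passing `fetch_live=None` models the "no API available" case directly, so the game can run fully offline from the pre-generated files.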

The honest reflection: I could not visualize the full development pipeline for this one. 3D game development is opaque and intimidating — there is so much that goes into it. Unity rendering was also a bottleneck on my machine, which led me to pivot the Alice project to Three.js instead.

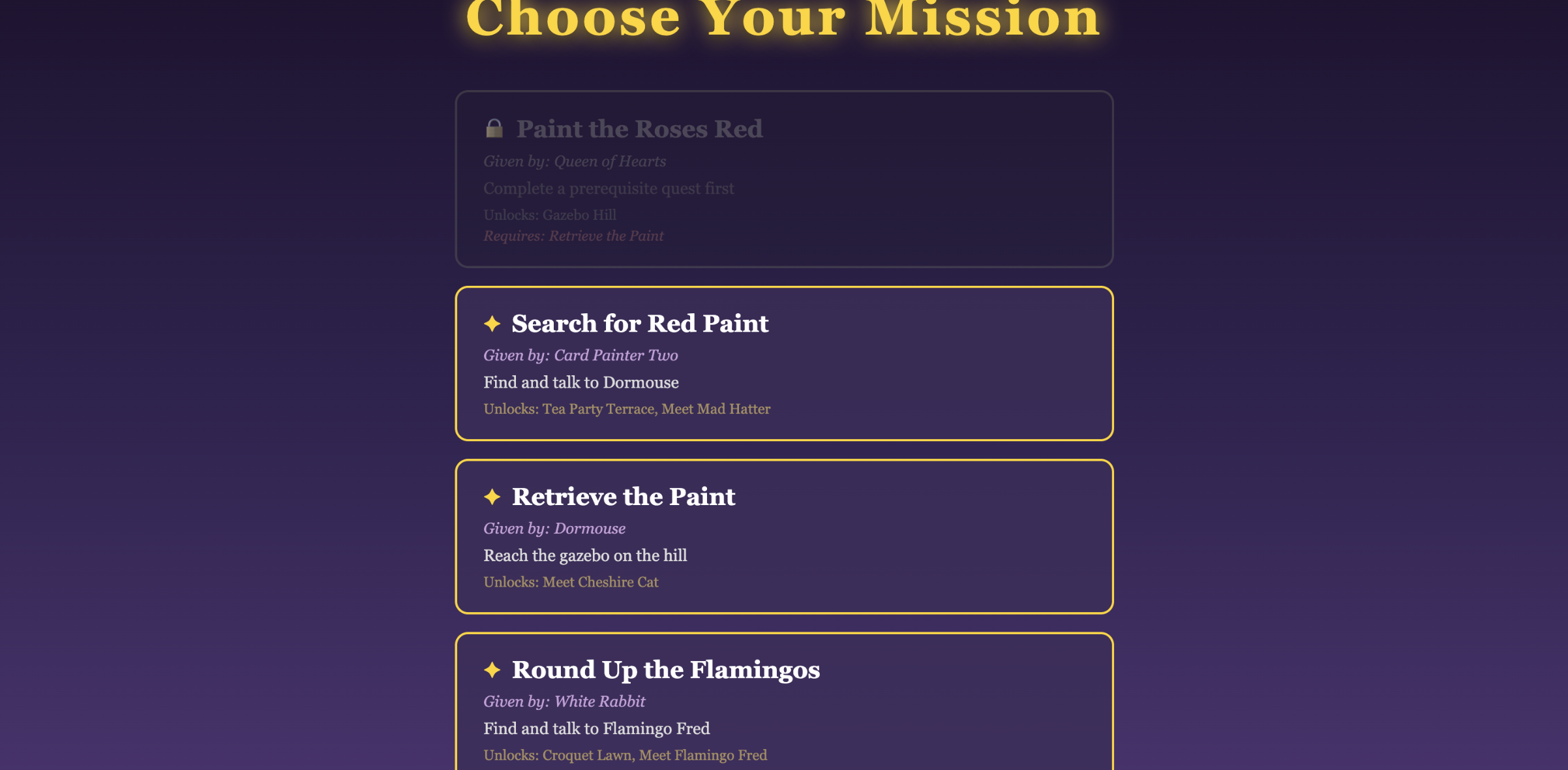

Alice in Wonderland: Browser-Based 3D Platformer

This became the most complete project of the week. A browser-based 3D platformer built with Three.js where Alice explores Wonderland, talks to 25 story characters, collects items, and uses a size-changing mechanic to solve puzzles. The art style is cel-shaded, inspired by Breath of the Wild — custom GLSL shaders and screen-space outlines.

A few things I'm proud of: zero external audio or texture files (everything is procedurally generated), zero runtime API calls (all AI-generated assets are pre-baked at build time), and 25 NPC character models with dialogue pulled from the original book text. The size-changing mechanic — shrink to fit through gaps, grow to break platforms — gives it actual gameplay.
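The size-changing rules boil down to two checks against Alice's current scale. The sketch below shows that logic in Python rather than in the game's Three.js code; the scale constants, the `Alice` class, and the threshold parameters are assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical scale factors for the three sizes.
SHRUNK, NORMAL, GROWN = 0.25, 1.0, 3.0


@dataclass
class Alice:
    base_height: float = 1.0  # height in world units at normal size
    scale: float = NORMAL

    @property
    def height(self) -> float:
        return self.base_height * self.scale


def can_fit_through(alice: Alice, gap_height: float) -> bool:
    """Shrinking helps: Alice passes only if she is no taller than the gap."""
    return alice.height <= gap_height


def can_break(alice: Alice, required_scale: float) -> bool:
    """Growing helps: a platform breaks only if Alice is at least this large."""
    return alice.scale >= required_scale
```

Because both checks read the same `scale`, a puzzle can require shrinking to get through a gap and then growing on the far side to break a platform.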

The workflow for this one was wild. The /workflow loop and subagents were impressive. I used Whisper to talk to my computer, and Claude's remote-control feature let me approve work from my phone while I was out running errands. It felt a bit weird being that hands-off. But the results were real.

What I Took Away

A week with four projects in domains I'd never worked in before. Some reflections:

We still need to know things. In the ideal case, AI meets a specialist where they're at. It doesn't replace the understanding — it amplifies it. When I didn't understand something, the AI couldn't paper over that gap.

Thorough PRD and CLAUDE.md files matter. The projects that went smoothest were the ones where I'd written clear requirements and context up front. Refinement of those documents was as important as the code itself.

The workflow loop was the real unlock. Clearing context and going through structured iteration cycles — not just chatting, but actually following a disciplined build loop — made the difference between a mess and a demo.

The bottlenecks were on my side. Computer power, API credits, and my own understanding were the limiting factors. Not the AI. That's a weird thing to sit with, but it's honest.

A lot of my week involved sitting there waiting for Claude to do its thing. That felt strange. But I'm excited to see what starting a new project from scratch will feel like now that I've internalized these tools.